What is Osquery?

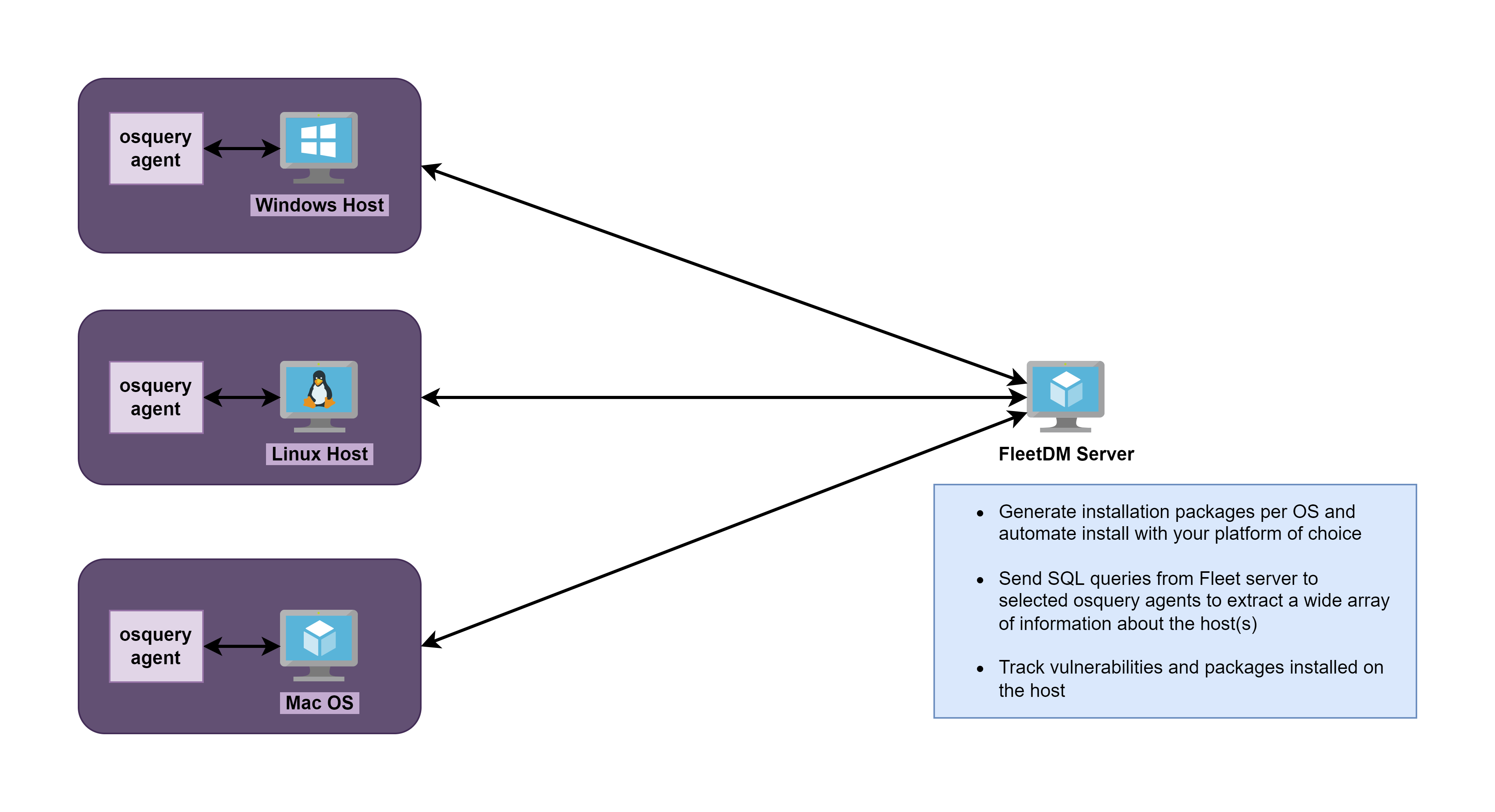

Osquery is an open source endpoint analytics tool that can query a massive variety of information about a host using SQL queries. Effectively, osquery catalogs information about a host in a relational database.

It has many applications, from cybersecurity to IT operations and policy enforcement. When combined with a SIEM or a centralized management server, osquery becomes a formidable tool in the cybersecurity operator's and system administrator's toolbox.

What is FleetDM?

FleetDM is an open source fork of the Kolide Fleet server. This central management server allows you to deploy and control your osquery endpoints at scale. An operator can log into the FleetDM server and run SQL queries against many osquery endpoints at once, which makes it incredibly powerful.

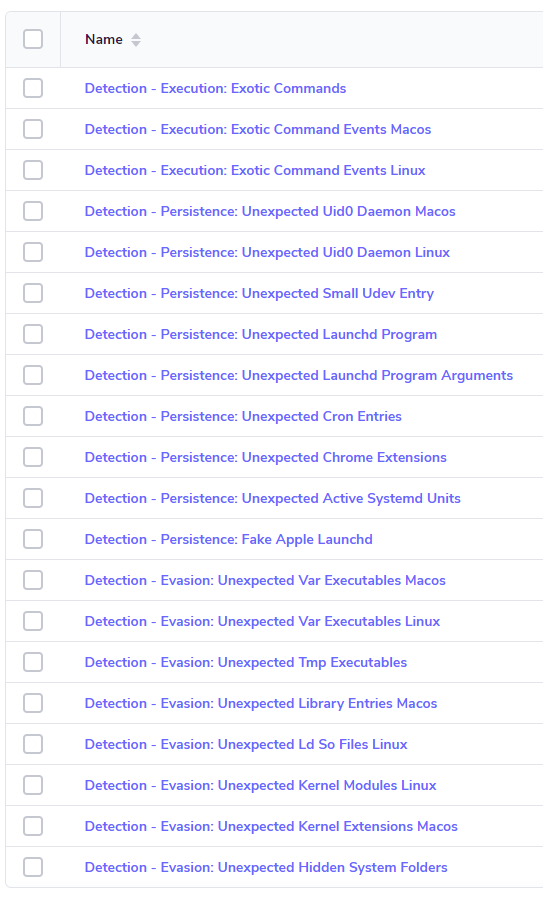

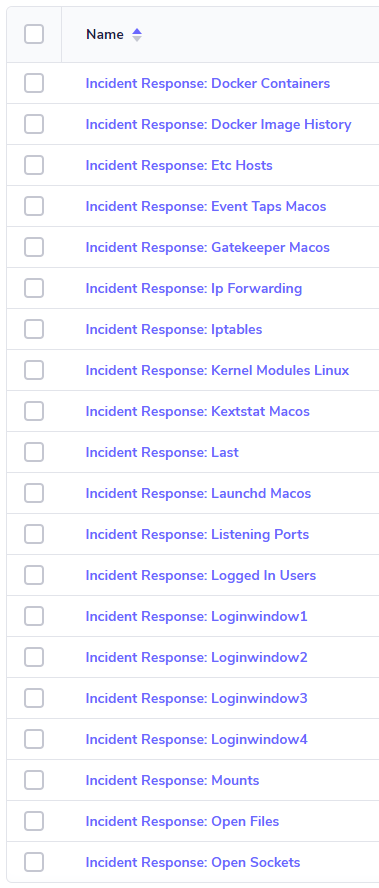

Threat Hunting Queries

A couple days ago (as of this writing), Thomas Strömberg announced that Chainguard had open-sourced their threat hunting queries that they use with osquery.

📢 I'm proud to announce that we've open-sourced our #osquery detection & response ruleset: https://t.co/IsNvtzzn8z

— Thomas Strömberg (@thomrstrom) October 20, 2022

It contains 130+ production-ready queries we found useful for detecting malware & other anomalous behavior on our endpoints, designed with alerting in mind. 🚨

The only problem for me was that I wanted a way to get them into FleetDM so that I could run the queries from the control server. I am not aware of any straightforward way to take the .sql files from the GitHub repo and convert them into a YAML document for import into the FleetDM server, although I would love it if someone would correct me on that.

Bulk Importing the Queries and Policies

Creating the YAML Documents

I got the idea to use the fleetctl apply tool to bulk import the queries from a YAML doc, as this is something you can do when installing FleetDM to import some standard queries.

I forked their GitHub repo and got to work on a PowerShell script that parses the .sql files and converts them to a YAML template that can be imported with fleetctl.

The PowerShell script is designed to be idempotent. Whether you run the script once or many times, existing queries remain in the template file, and only new queries are added to it.

Because of that, you can safely import the YAML document multiple times. The fleetctl command will not create duplicate entries; it updates the existing query of the same name with the same SQL, effectively overwriting the data with identical data.
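As a rough illustration of the conversion step (this is not the actual PowerShell script), here is a minimal shell sketch. It assumes the query name can be taken from the .sql file name and that each query is wrapped in Fleet's apiVersion: v1 / kind: query YAML layout. The function names sql_to_fleet_yaml and build_query_pack are hypothetical. Instead of merging into an existing template, it rebuilds the whole file on each run, which is a simpler way to get repeatable output.

```shell
#!/bin/sh
# Sketch: emit one Fleet "query" YAML document per .sql file.
# Assumptions: the query name is the file name without ".sql" (and needs
# no YAML quoting), and the apiVersion/kind/spec layout is what
# `fleetctl apply` expects for queries.

sql_to_fleet_yaml() {
    sql_file="$1"
    query_name="$(basename "$sql_file" .sql)"

    printf '%s\n' '---'
    printf 'apiVersion: v1\n'
    printf 'kind: query\n'
    printf 'spec:\n'
    printf '  name: %s\n' "$query_name"
    # A YAML block scalar keeps the SQL readable and avoids quoting issues.
    printf '  query: |\n'
    # awk always ends each line with a newline, even if the file does not.
    awk '{ print "    " $0 }' "$sql_file"
}

# Rebuilding the output from the sorted list of .sql files produces the
# same file on every run, so the step is safe to repeat.
build_query_pack() {
    src_dir="$1"
    out_file="$2"
    : > "$out_file"
    find "$src_dir" -type f -name '*.sql' | sort | while IFS= read -r f; do
        sql_to_fleet_yaml "$f" >> "$out_file"
    done
}
```

Usage might look like `build_query_pack ./queries my-queries.yml`, where both the directory and the output file name are placeholders.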

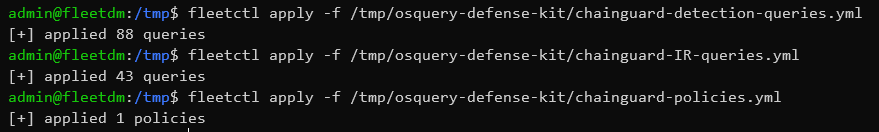

Importing the YAML Documents

The first step is to run the script to generate the YAML documents (or you can use the existing ones in the repository).

cd osquery-defense-kit

pwsh -f ./Out-FleetYamlTemplate.ps1

Once you've run the script and the .yml documents are generated, it's time to import the queries into FleetDM. I show you how to do this in my notes here, except, instead of importing the standard query pack, you'll import the new YAML documents.

Once the import is complete, you can log into FleetDM and check out your new queries.

Updating the Query Packs

cd osquery-defense-kit

# Delete the existing queries & policies

# In the past, the maintainers have renamed existing queries

# This causes duplicate queries with different names

fleetctl delete -f chainguard-detection-queries.yml

fleetctl delete -f chainguard-IR-queries.yml

fleetctl delete -f chainguard-policies.yml

# Remove the YAML docs, as new ones will be generated

rm chainguard-detection-queries.yml

rm chainguard-IR-queries.yml

rm chainguard-policies.yml

# Pull the latest query files

git pull

pwsh -f ./Out-FleetYamlTemplate.ps1

fleetctl apply -f chainguard-detection-queries.yml

fleetctl apply -f chainguard-IR-queries.yml

fleetctl apply -f chainguard-policies.yml

Ingesting Osquery Logs into Wazuh

Fleet Osquery Logging Overview

You can run queries on registered osquery endpoints in the following ways using FleetDM:

- Ad-hoc queries (live queries)

- Scheduled queries

Live Queries

When running ad-hoc queries, you click the Queries menu and choose a query from the list of available options. Then, you check the host(s) or group of hosts to run the queries against.

This sends a live query to the osquery agent running on the target host, and the results of the SQL query are streamed back to the FleetDM server.

Any results from ad-hoc queries are logged at /tmp/osquery_result on the FleetDM server, not on the endpoints.

Scheduled Queries

Scheduled queries are run at regular intervals on groups of hosts. A scheduled query can be set to one of two types:

- Snapshot

- Differential

In summary, a snapshot query logs the complete set of results every time the query is run. A differential query, on the other hand, logs only the results that differ from the previous run.

Official documentation with more detail on the query types
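To make the distinction concrete, here is a minimal shell sketch that compares two runs of the same query, each saved as one result row per line. It is only an illustration of the idea, not how osquery implements it; the function names snapshot_log and differential_log and the "added:" / "removed:" prefixes are made up for this example.

```shell
#!/bin/sh
# Sketch: contrast snapshot and differential logging using two runs of
# the same query, each stored as one result row per line.

# Snapshot: log the complete result set of the current run.
snapshot_log() {
    sort "$1"
}

# Differential: log only the rows that changed since the previous run.
# comm needs sorted input, so both runs are sorted into temp files first.
differential_log() {
    prev_sorted="$(mktemp)"
    curr_sorted="$(mktemp)"
    sort "$1" > "$prev_sorted"
    sort "$2" > "$curr_sorted"
    # Rows that appear only in the current run were added.
    comm -13 "$prev_sorted" "$curr_sorted" | sed 's/^/added: /'
    # Rows that appear only in the previous run were removed.
    comm -23 "$prev_sorted" "$curr_sorted" | sed 's/^/removed: /'
    rm -f "$prev_sorted" "$curr_sorted"
}
```

If the second run contains one new row, the snapshot output repeats every row, while the differential output contains only that one new row.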

On a default FleetDM installation, scheduled queries are logged at the following locations on osquery endpoints:

Snapshot Queries

- Linux: /opt/orbit/osquery_log/osqueryd.snapshots.log

- Windows: C:\Program Files\Orbit\osquery_log\osqueryd.snapshots.log

Differential Queries

- Linux: /opt/orbit/osquery_log/osqueryd.results.log

- Windows: C:\Program Files\Orbit\osquery_log\osqueryd.results.log
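Before pointing a log collector at these files, it can help to confirm they actually exist on an endpoint. Here is a minimal sketch for Linux, assuming the default Orbit log directory listed above; check_osquery_logs is a hypothetical helper name, and the directory can be overridden with the first argument.

```shell
#!/bin/sh
# Sketch: report whether the scheduled-query log files are present and
# readable. Defaults to the Linux Orbit log directory from this post.

check_osquery_logs() {
    log_dir="${1:-/opt/orbit/osquery_log}"
    missing=0
    for name in osqueryd.results.log osqueryd.snapshots.log; do
        if [ -r "$log_dir/$name" ]; then
            echo "ok: $log_dir/$name"
        else
            echo "missing: $log_dir/$name"
            missing=1
        fi
    done
    # Non-zero exit status if any file was missing.
    return "$missing"
}
```

Note that a file may legitimately be missing if no scheduled query of that type has produced results yet.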

Reading Logs with Wazuh Agents

The hosts where you have Wazuh agents installed are most likely the same hosts that run osquery. It is therefore easiest, and makes the most sense, to push the log collection directives to the default Wazuh group.

The default group's agent.conf file in Wazuh is split into two sections, one for os="linux" and one for os="windows". We can place the <localfile></localfile> directives in each respective section.

<agent_config os="linux">

<localfile>

<location>/opt/orbit/osquery_log/osqueryd.results.log</location>

<log_format>json</log_format>

<label key="log_source">osquery</label>

<label key="osquery_log_type">differential</label>

</localfile>

<localfile>

<location>/opt/orbit/osquery_log/osqueryd.snapshots.log</location>

<log_format>json</log_format>

<label key="log_source">osquery</label>

<label key="osquery_log_type">snapshot</label>

</localfile>

<!-- removed by author for brevity -->

</agent_config>

<agent_config os="windows">

<localfile>

<location>C:\Program Files\Orbit\osquery_log\osqueryd.results.log</location>

<log_format>json</log_format>

<label key="log_source">osquery</label>

<label key="osquery_log_type">differential</label>

</localfile>

<localfile>

<location>C:\Program Files\Orbit\osquery_log\osqueryd.snapshots.log</location>

<log_format>json</log_format>

<label key="log_source">osquery</label>

<label key="osquery_log_type">snapshot</label>

</localfile>

<!-- removed by author for brevity -->

</agent_config>

Click 'Save' once you've updated the configuration.

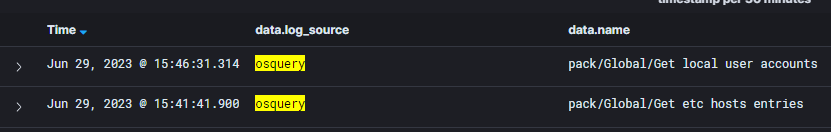

Once you have saved the agent.conf configuration, this will be pushed to the Wazuh agents and they will begin ingesting the target log files.

Wrapping Up

If you've followed along with me to the end, thank you for reading. And thank you to the folks at osquery (Meta), FleetDM, and Chainguard for their contributions to the open source community.